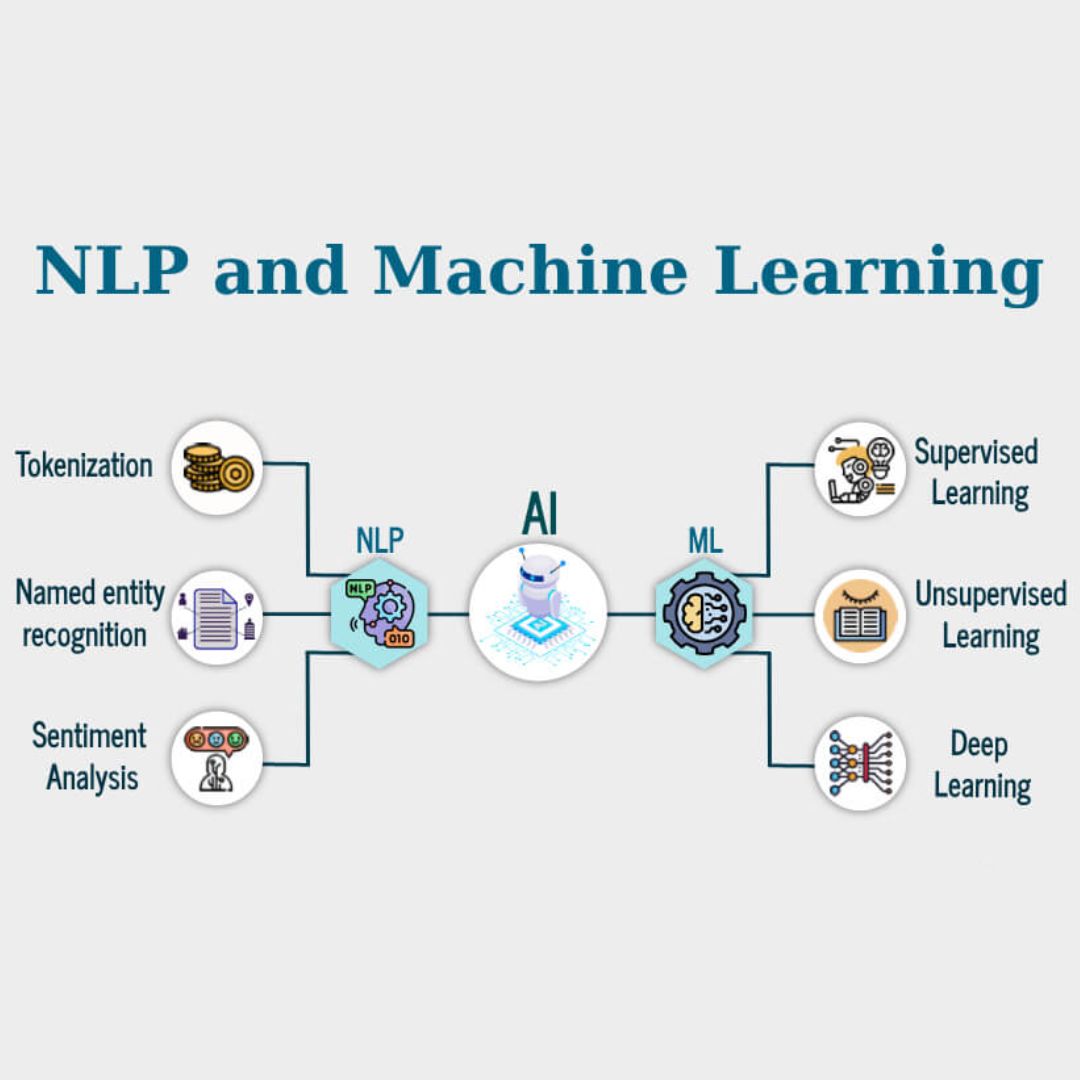

Machine Learning for NLP

Bridging the gap between classical Machine Learning and Text Analytics. Covers Preprocessing, TF-IDF, SVM, and MLP.

The **Machine Learning for NLP** course connects statistical modeling to modern language processing. Before moving on to Transformers, it helps to understand how classical algorithms such as SVM and Naive Bayes handle unstructured text data.

Instructor: Zahra Amini

This repository walks through a structured pipeline from raw text to predictive models, covering the ground between text analysis and neural networks.

Course Syllabus

The curriculum is organized into logical modules, simulating a real-world NLP pipeline:

- Module 1: Text Wrangling & Cleaning
  - S00-S02: Tokenization, Stop-word removal, Stemming vs. Lemmatization using NLTK & spaCy.
- Module 2: Feature Extraction (Text to Numbers)
  - S03-S04: Implementing Bag of Words (BoW), TF-IDF, and N-Grams from scratch.
- Module 3: Probabilistic & Geometric Models
  - S07-S10: Text Classification using Logistic Regression, K-Nearest Neighbors (KNN), and Naive Bayes.
- Module 4: Advanced Classification
  - S11-S12: High-dimensional separation using Support Vector Machines (SVM) and LDA.
- Module 5: The Neural Shift
  - S13-S14: Introduction to Multi-Layer Perceptrons (MLP) for text data.
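To give a feel for the first two modules, here is a minimal sketch of tokenization, stop-word removal, Bag of Words, N-Grams, and TF-IDF in plain Python. It is an illustration and not the course's own code. The stop-word list, the sample corpus, and the function names `tokenize`, `ngrams`, `bag_of_words`, and `tf_idf` are all assumptions made for this example. The weighting uses the textbook form tf = count / document length and idf = ln(N / df); libraries such as scikit-learn apply smoothing and normalization by default, so their numbers will differ.

```python
import math
import re
from collections import Counter

# Tiny illustrative stop-word list (an assumption; NLTK and spaCy ship much larger ones).
STOP_WORDS = {"a", "an", "the", "is", "was", "and", "of", "to", "it"}


def tokenize(text):
    """Lowercase the text, keep runs of letters, and drop stop words."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return [t for t in tokens if t not in STOP_WORDS]


def ngrams(tokens, n):
    """Return the n-grams of a token list, each joined with a space."""
    return [" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def bag_of_words(docs):
    """Return (sorted vocabulary, one count vector per document)."""
    vocab = sorted({t for doc in docs for t in doc})
    vectors = []
    for doc in docs:
        counts = Counter(doc)
        vectors.append([counts[term] for term in vocab])
    return vocab, vectors


def tf_idf(docs):
    """Unsmoothed TF-IDF: tf = count / doc length, idf = ln(N / df)."""
    vocab, counts = bag_of_words(docs)
    n_docs = len(docs)
    # Document frequency: in how many documents each term appears.
    df = [sum(1 for row in counts if row[j] > 0) for j in range(len(vocab))]
    idf = [math.log(n_docs / d) for d in df]
    weights = []
    for doc, row in zip(docs, counts):
        length = len(doc) or 1  # avoid dividing by zero for empty documents
        weights.append([(c / length) * w for c, w in zip(row, idf)])
    return vocab, weights


# Hypothetical three-document corpus, used only for this example.
corpus = [
    "The movie was great and the acting was great",
    "The movie was boring",
    "Great acting, boring plot",
]
tokens = [tokenize(doc) for doc in corpus]
vocab, counts = bag_of_words(tokens)
_, weights = tf_idf(tokens)
bigrams = ngrams(tokens[0], 2)

print(vocab)      # vocabulary shared by all documents
print(counts[0])  # Bag-of-Words vector for the first document
print(bigrams)    # bigrams of the first document
```

The rows of `weights` can be passed as feature vectors to any of the classifiers in the later modules. A term such as "plot" that appears in a single document receives a higher idf than terms shared across documents.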